When we released the beta version of the EaaSI software on March 5, our hope was to learn from putting the software into action. Yes, we wanted to identify bugs and errors in the system, but we also wanted to uncover details, both practical and conceptual, to address in future phases of development and deployment. After working on the software internally for fifteen months, it was time for fresh eyes and unbiased opinions.

The Test Process

We knew that we couldn’t just release the code, cross our fingers, and hope for the best, so we provided a structured process for each node to test the software, report bugs, and provide feedback. Once each partner completed deployment of their EaaSI instance (see Mark Suhovecky’s post for more on the EaaSI deployment process), they were asked to complete a set of test cases focused on core functions of the EaaSI beta. The test cases were designed to confirm that the system functioned as planned, but they were also an opportunity to guide node users through our understanding of typical workflows in the EaaSI system, including:

- Synchronization and replication from other Network nodes

- Importing software installation materials and digital objects

- Installing software in and adding digital objects to emulation environments

- Publishing environments for retrieval by other Network nodes

- Editing environment metadata and deleting environments from local storage

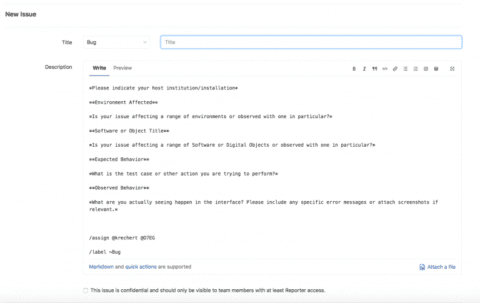

Each test case included “Expected Outcomes” to help testers determine whether the software was operating properly, along with question prompts to solicit feedback. Using supplied sample data (including classic applications like Microsoft Golf and Encarta), testers worked their way through these test scenarios, recording outcomes and detailing the user experience of each. Bugs were reported through the EaaSI Tech Talk user group message board or in our bug reporting template on GitLab.

Klaus and Oleg have responded admirably to the various error reports and questions that have come up through testing. As with deployment, the idiosyncrasies of each node mean that new errors and challenges arise often, but every new wrinkle is an opportunity to improve.

What We’ve Learned

By keeping the scope of our test cases relatively narrow, we were able to focus our error reporting on a few key areas. We anticipated success in the mature areas of the system (importing software, creating environments, etc.) that were already part of the EaaS framework. Those tests were indeed largely successful, but the added features of synchronization and replication within the decentralized EaaSI Network raised new challenges: sharing specific versions of the emulation software underlying EaaS, coordinating server configuration between discrete EaaSI installations, moving storage and processing to the cloud, and more. All of these are being addressed as a result.

The test process is also revealing many potential updates needed to the overall user experience and business logic of the system. Since the current UI is meant to demonstrate back-end functionality and not for production use, these issues were not only expected but also a desired outcome. The feedback from the testers drives home the need for guidance and communication through the interface so users of the EaaSI system know what to do and what happens when they do it. This is why testing has been so beneficial to the Project Team: we’re used to the system as currently designed and take for granted many of the little things we’ve learned to work around. As we begin to work on updates to the front end, it’s helpful to be reminded of this point.

What’s Next

We haven’t placed any restrictions on the nodes’ use of the system following testing, and we encourage them to continue creating and sharing environments (with the caveat that this remains a beta period and data may not be carried over into production). This will hopefully stress test the system a bit, and we will start to see just what happens when nodes are regularly publishing, synchronizing, and replicating data. Testing has already revealed conceptual questions that we need to define further and build into the system’s functionality, such as “What to do with duplicate resources?” and “Who can edit metadata of shared environments?” This aligns with our focus on the front end in the coming months as we grapple with the system’s overall user experience and how best to structure and manage its operation.

Stakeholder input is key to creating a system that is responsive to user needs and enjoyable to use. As we continue to expand the system, we intend to continue routine testing with our node partners, but also to extend testing to other groups to ensure a broad spectrum of feedback. We’re thrilled with what we’ve learned from testing so far and hope others will take the time to help us improve the EaaSI system.

Thanks as always to our Node Hosts/Partners for their participation and patience as the system goes through growing pains.